The New Threat Frontier: Dark LLMs and the Rise of “AI-for-Evil”

Dark AI Is Becoming a New Weapon for Cybercriminals

What WormGPT 4 and KawaiiGPT Mean for Your Organization

Artificial intelligence has transformed how businesses work, how developers build software, and how security teams defend systems. But in 2025, a disturbing shift is becoming clear: the same AI that boosts productivity is now being weaponized by cybercriminals.

Recent intelligence reports confirm that malicious AI tools like WormGPT 4 and KawaiiGPT are now openly available, whether sold on underground forums or published on public code-hosting sites. These tools allow even unskilled attackers to launch powerful cyberattacks with ease. At Terra System Labs, we see this as one of the most dangerous evolutions in modern cybersecurity.

What Are WormGPT 4 and KawaiiGPT?

WormGPT 4

WormGPT was first discovered in 2023 and was eventually taken offline. In November 2025, the tool resurfaced as a far more powerful version called WormGPT 4. It is now being sold on underground forums with monthly, yearly, and even lifetime access options.

Security researchers found that WormGPT 4 can:

- Generate highly realistic phishing and scam emails

- Create ransomware scripts and malicious PowerShell payloads

- Automate data theft, system compromise, and command execution

- Help attackers disguise their code to bypass detection tools

In the researchers' testing, WormGPT 4 generated fully functional ransomware capable of encrypting files and demanding payment. What once required deep malware expertise now takes only a few prompts.

KawaiiGPT

KawaiiGPT is dangerous in a different way. Unlike WormGPT 4, which is sold on underground forums, it is openly available on GitHub. Anyone with basic technical knowledge can download and run it.

KawaiiGPT can:

- Create spear-phishing campaigns

- Generate fake login pages

- Produce Python scripts for lateral movement

- Assist attackers with reconnaissance and exploitation

This means that cybercrime is no longer limited to skilled hackers. Students, insiders, and low-level attackers can now cause enterprise-scale damage.

Why This Is a Serious Turning Point in Cybersecurity

This new wave of dark AI tools removes the biggest barrier that once slowed attackers: technical skill. Malicious AI models now handle much of the thinking, scripting, and planning.

This creates three major problems for organizations:

- Attacks become faster and cheaper to run

- Phishing and malware become harder to distinguish from legitimate activity

- The number of active attackers increases rapidly

In the past, poor grammar or sloppy code helped security teams detect low-effort attacks. Now, AI-generated phishing emails are professional, polished, and highly convincing. This makes detection methods that rely on those surface cues far less effective.
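When writing quality stops being a useful signal, one option is to look at signals that do not depend on the message text at all. The Python sketch below is an illustration under stated assumptions, not a complete filter: it assumes the receiving mail server has already stamped an Authentication-Results header (RFC 8601) on the message, and the function names `domain_of` and `sender_signals` are hypothetical names chosen for this example.

```python
from email import message_from_string
from email.utils import parseaddr


def domain_of(address_header):
    """Return the lowercased domain of an address header, or '' if absent."""
    _, addr = parseaddr(address_header or "")
    return addr.rpartition("@")[2].lower() if "@" in addr else ""


def sender_signals(raw_email):
    """List content-independent warning signs found in a raw email.

    Assumption: the receiving server adds an Authentication-Results
    header containing spf=, dkim= and dmarc= results.
    """
    msg = message_from_string(raw_email)
    signals = []

    # Flag any authentication check that is missing or did not pass.
    auth = (msg.get("Authentication-Results") or "").lower()
    for check in ("spf", "dkim", "dmarc"):
        if f"{check}=pass" not in auth:
            signals.append(f"{check} did not pass")

    # A Reply-To that points at a different domain than From is a
    # common trait of phishing, however polished the message body is.
    from_domain = domain_of(msg.get("From"))
    reply_domain = domain_of(msg.get("Reply-To"))
    if reply_domain and reply_domain != from_domain:
        signals.append("reply-to domain differs from from domain")

    return signals
```

Calling `sender_signals(raw_message)` returns a list of plain-language findings, and an empty list means none of these particular checks fired. A well-written phishing email can still fail these checks, so they are best treated as one input among several rather than a verdict.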

What This Means for Businesses Today

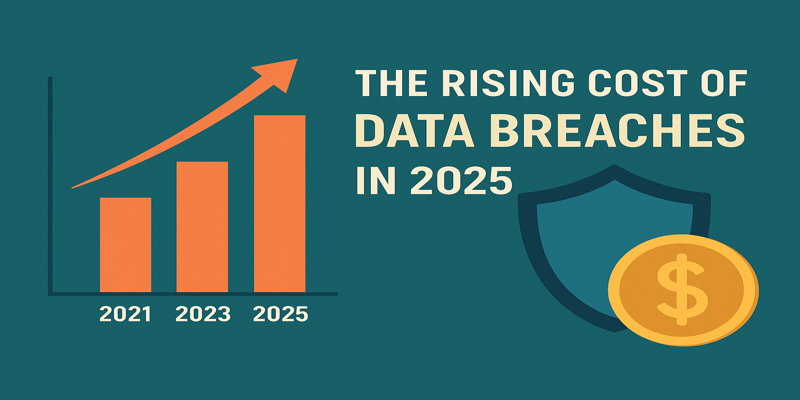

From banking to healthcare, from startups to global enterprises, every organization is now exposed to AI-powered cybercrime. These are no longer future risks. They are active threats already at work in the real world.

Organizations now face:

- Higher phishing success rates

- Faster malware development cycles

- Automated exploitation at massive scale

- Lower cost for threat actors to operate

This makes prevention, detection, and response more critical than ever.

Why Proactive Security Is No Longer Optional

At Terra System Labs, our real-world testing shows that many organizations still rely on outdated threat models. The rise of malicious AI means defenses must evolve immediately.

Here is what modern security teams must prioritize now:

- Advanced penetration testing that simulates real attacker behavior

- Secure code review to eliminate exploitable logic flaws

- Continuous phishing and social engineering awareness training

- Red team exercises that include AI-driven attack simulations

- Clear policies for safe internal use of AI tools

Security can no longer be reactive. It must be proactive, test-driven, and continuously updated.

Terra System Labs Perspective

The rise of WormGPT 4 and KawaiiGPT confirms one critical truth: cyber threats are no longer limited by human skill. They are now amplified by machines.

At Terra System Labs, we treat this shift as a call to strengthen security at every layer:

- From application source code to cloud infrastructure

- From employee awareness to attack simulation

- From compliance auditing to offensive security assessments

Our mission is to help organizations stay ahead of modern threats, not chase them after damage is done.

Final Thought

AI is not the enemy. Uncontrolled and weaponized AI is.

As dark AI tools continue to spread across underground markets, companies that delay proactive security will face higher risks, greater financial losses, and serious reputational damage.

Now is the time to test your defenses, train your people, and prepare your systems for the next generation of cyber threats.
